AWS Web Talk: Intelligent Cloud Orchestration and Container Program Overview

Transcript:

Hi, I’m Todd Bernson – CTO at Blue Sentry Cloud and an AWS Ambassador with 12 AWS certifications.

I’m often asked by executives to explain cloud-native architectures, so I’ve put together a multi-part series explaining common patterns and technical jargon like container orchestration, streaming applications, and event-driven architectures.

In this installment, I’ll be breaking down another common cloud-native application architecture.

This is container orchestration. Containers as most teams know them today have been around for only about a decade.

Docker was released in 2013 and was created to solve a problem heard across dev teams up to that point.

A common saying was “Well, it works on my laptop.”

Docker put the exact same runtimes, packages, and application versions all the way through development and onto all of the team members’ computers.

Soon after, container orchestration tools were released.

Tools such as Docker Swarm appeared, and most notably Kubernetes, which grew out of Google’s Borg, was released in 2015.

Container orchestration allows for running virtualization inside of virtualization.

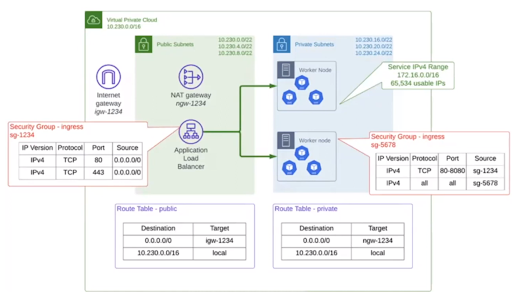

Here we see a very simple EKS architecture on AWS:

We can see the VPC that holds all of our Kubernetes architecture.

Inside of that, we have an internet gateway, a NAT gateway, and an application load balancer that are public-facing.

On a private subnet, we have EC2 instances acting as EKS worker nodes. Inside each of these worker nodes are the pods and the containers that we’ll talk about in just a little bit: virtualized services and machines inside the actual virtual machine that is the EC2 instance.

Scalable pods, which wrap the containers running microservices or jobs, are treated like cattle rather than pets.

If a pod becomes unhealthy, it’s just restarted or even destroyed, while Kubernetes quickly brings up another pod automatically.
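To make that self-healing idea concrete, here is a minimal sketch in Python. It is a toy model, not how Kubernetes is actually implemented: the `Pod` and `ReplicaSet` classes and the `sample-N` names are assumptions made up for illustration. The point is only the loop that compares the desired count with the healthy count and fills the gap.

```python
from dataclasses import dataclass, field
from itertools import count


@dataclass
class Pod:
    name: str
    healthy: bool = True


@dataclass
class ReplicaSet:
    """Toy stand-in for a controller that keeps N healthy pods running."""
    desired: int
    pods: list = field(default_factory=list)
    _ids: count = field(default_factory=lambda: count(1), repr=False)

    def reconcile(self) -> None:
        # Drop pods that failed their health check (cattle, not pets).
        self.pods = [p for p in self.pods if p.healthy]
        # Start replacements until the actual count matches the desired count.
        while len(self.pods) < self.desired:
            self.pods.append(Pod(name=f"sample-{next(self._ids)}"))


rs = ReplicaSet(desired=5)
rs.reconcile()                # initial rollout: five pods
rs.pods[2].healthy = False    # simulate one pod failing
rs.reconcile()                # the failed pod is dropped and a new one started
print([p.name for p in rs.pods])
```

The failed pod is never repaired in place; it is simply discarded and a fresh one takes its slot.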

Ingresses and services handle networking and work based on the labels (tags) that deployments, pods, or services carry.
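As a rough illustration of how that matching works, the sketch below models an equality-based selector in Python. The pod names and label values are hypothetical, and a real cluster does this inside the control plane rather than in user code.

```python
def matches(selector: dict, labels: dict) -> bool:
    """True when every key/value pair in the selector appears in the labels."""
    return all(labels.get(key) == value for key, value in selector.items())


# Hypothetical pods with the labels a deployment might have stamped on them.
pods = [
    {"name": "sample-a", "labels": {"app": "sample", "tier": "web"}},
    {"name": "sample-b", "labels": {"app": "sample", "tier": "web"}},
    {"name": "billing-a", "labels": {"app": "billing", "tier": "api"}},
]

# A service only needs to know the labels, not the individual pods.
service_selector = {"app": "sample"}
endpoints = [p["name"] for p in pods if matches(service_selector, p["labels"])]
print(endpoints)
```

Because the service targets labels rather than pod names, pods can come and go without the service definition changing.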

There are also many security features, such as role-based access control and network policies, that help to ensure east-west security inside of your cluster.

Kubernetes has allowed app deployment and scaling to happen in seconds instead of the minutes that EC2 instances or virtual machines take.

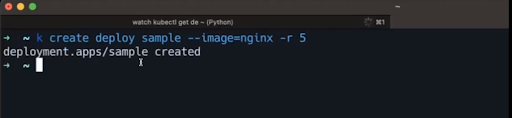

I’ve brought up my terminal window that’s connected to an EKS cluster, and I’m going to show you just how quickly a Kubernetes deployment can go.

I’m using kubectl here, and I just create a deployment called sample with the image nginx, and I’m going to create five pods, five replicas, off of this.

I could create 10,000 replicas off of this, but I’m going to have five for our resiliency here.
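For readers following along, the command described here probably looks something like the one built below. This is a reconstruction from the narration, not a copy of what is on screen; the helper name `create_deployment_cmd` is made up, and the sketch only prints the command instead of running it.

```python
import shlex


def create_deployment_cmd(name: str, image: str, replicas: int) -> list[str]:
    """Build the kubectl argv for creating a deployment with N replicas."""
    return [
        "kubectl", "create", "deployment", name,
        f"--image={image}",
        f"--replicas={replicas}",
    ]


create_cmd = create_deployment_cmd("sample", "nginx", 5)
watch_cmd = ["kubectl", "get", "pods", "--watch"]  # follow the pods as they start

print(shlex.join(create_cmd))
print(shlex.join(watch_cmd))
# Against a real cluster you could run: subprocess.run(create_cmd, check=True)
```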

I create that and we can watch as this gets created:

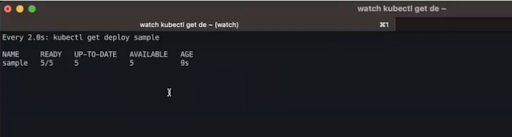

In just a matter of six seconds, we have all five pods up.

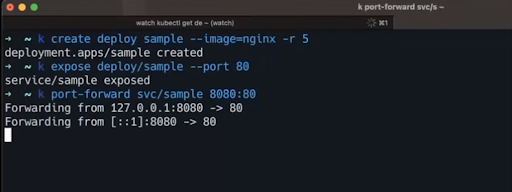

Now what does that mean? Let’s look at how we can create a service that points to this deployment, and we’re going to expose it on port 80.

We’ll simply do a quick port forward and pop over to our web browser.
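The two steps just described, exposing the deployment and forwarding a local port, can be sketched as the commands below. They are reconstructed from the narration rather than taken from the screen, and they assume the service takes the deployment’s name (`sample`) and that local port 8080 is used.

```python
import shlex

# Create a service in front of the deployment, listening on port 80.
expose_cmd = ["kubectl", "expose", "deployment", "sample", "--port=80"]

# Forward local port 8080 to port 80 of that service (assumed local port).
forward_cmd = ["kubectl", "port-forward", "service/sample", "8080:80"]

for cmd in (expose_cmd, forward_cmd):
    print(shlex.join(cmd))
```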

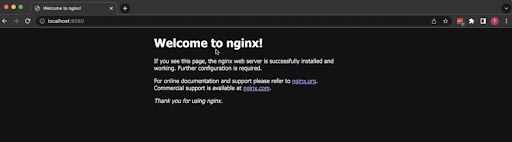

Now, if we come to http://localhost:8080, which is where our port forward was going, we can see our nginx deployment is fully deployed.

In under 10 seconds, we were able to deploy our application. It’s exactly the same across all five replicas, or 50, or 5,000 replicas, and if I wanted to deploy a different version of my application, I could do that and Kubernetes would take care of rolling all of that out for me.
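To give a feel for what rolling out a new version means, here is a simplified simulation in Python. It is a sketch under stated assumptions: the `max_surge` parameter is loosely modeled on the idea of starting a few extra pods before retiring old ones, and the version strings are hypothetical. The real rollout logic in Kubernetes has more settings and health checks than this.

```python
def rolling_update(pods: list[str], new_version: str, max_surge: int = 1) -> list[list[str]]:
    """Return the pod versions after each step of a simplified rolling update."""
    if max_surge < 1:
        raise ValueError("max_surge must be at least 1")
    current = list(pods)
    history = [list(current)]
    while any(version != new_version for version in current):
        old_left = sum(version != new_version for version in current)
        batch = min(max_surge, old_left)
        # Surge: start new pods alongside the old ones first...
        current += [new_version] * batch
        # ...then retire the same number of old pods, so capacity never dips.
        for _ in range(batch):
            current.remove(next(v for v in current if v != new_version))
        history.append(list(current))
    return history


steps = rolling_update(["v1"] * 5, "v2", max_surge=2)
for step in steps:
    print(step)
```

At every step there are still five pods serving traffic, which is why users generally do not notice the change.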

I’ll quickly stop that port forward and go over to my other tab, and we can see that after just a couple of minutes we still have our five replicas running.

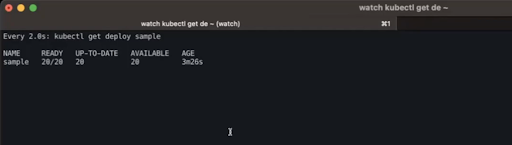

Now if I wanted to scale this up to let’s say 20 – how long would that take?

We can wait just a few seconds here, see that we’ve doubled and that we’ve doubled again.

In under 10 seconds, we have scaled from five pods able to take this traffic to 20 pods able to take this traffic.
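The scale-up shown here most likely corresponds to a single command like the one below. It is reconstructed from the narration, and the helper name `scale_cmd` is an assumption for illustration.

```python
import shlex


def scale_cmd(deployment: str, replicas: int) -> list[str]:
    """Build the kubectl argv that sets a deployment's replica count."""
    return ["kubectl", "scale", "deployment", deployment, f"--replicas={replicas}"]


scale_to_20 = scale_cmd("sample", 20)
print(shlex.join(scale_to_20))
```

Nothing about the application changes; only the desired replica count does, and Kubernetes adds pods until the cluster matches it.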

Container orchestration allowed for a completely new way of continuous delivery with the GitOps model.

This is a discussion to go deeper into another time, but from a high level, GitOps allows developers to commit their code and have it automatically deployed by the GitOps tool.

Tools like Argo CD are configured to check for any changes in the Git repo and deploy those changes.

Any changes to the infrastructure that are made manually are instantly reverted to the desired configuration.

GitOps allows for faster continuous delivery and for quicker automated remediation of infrastructure changes.
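The core of that model is a comparison between what is committed to Git and what is live in the cluster. The sketch below shows the idea in plain Python. It is not Argo CD’s implementation, and the manifest fields and values (`nginx:1.25`, five replicas) are hypothetical.

```python
def diff(desired: dict, live: dict) -> dict:
    """Fields whose live value differs from the value committed to Git."""
    return {key: value for key, value in desired.items() if live.get(key) != value}


def sync(desired: dict, live: dict) -> dict:
    """Return the live state after overwriting drifted fields with the desired ones."""
    return {**live, **diff(desired, live)}


desired = {"image": "nginx:1.25", "replicas": 5}  # hypothetical values from the Git repo
live = dict(desired)

live["replicas"] = 1          # someone changes the cluster by hand
drift = diff(desired, live)   # the tool notices the difference...
live = sync(desired, live)    # ...and puts the desired state back
print(drift, live)
```

Git stays the single source of truth: a manual change shows up as drift and is overwritten, while a new commit shows up as drift in the other direction and is deployed.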

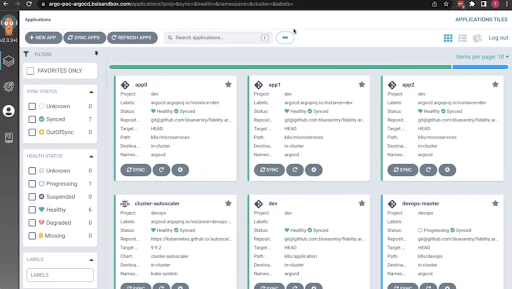

Here’s a sample deployment of Argo CD inside of EKS where we have a few different applications that have been deployed along with a few services that help the EKS cluster run:

If I pop into app zero and just go ahead and delete an entire deployment, we will watch all of that disappear, and in under two seconds we see the entire thing come back up.

If I go to that application to check whether it is still healthy, we can see that it is.

Container orchestration tools in the cloud such as EKS and Fargate are great resources for longer-running processes or resource-intensive workloads.

Because the GitOps model ensures the exact same architecture in lower environments and prod, deployments run into far fewer issues.

Container orchestration also allows for a much quicker deployment and feedback model for your development teams.
