Blue Sentry Cloud AWS Web Talk: What is Desktop and Application Streaming in AWS?

Transcript:

Hi, I’m Todd Bernson – CTO at Blue Sentry Cloud and an AWS Ambassador with 12 AWS certifications.

I’m often asked by executives to explain Cloud-native architectures, so I’ve put together a multi-part series explaining common patterns and technical jargon like container orchestration, streaming applications, and event-driven architectures.

In this installment, I’ll be breaking down another common Cloud-native application architecture.

Streaming applications are a fairly new architecture.

Streaming came to AWS in 2013 with the introduction of Amazon Kinesis.

This allowed Cloud users to ingest enormous amounts of data in near real-time, store it, and run processing such as ETL tasks on it.

AWS has since expanded its streaming services with Amazon Kinesis Data Firehose and Amazon Managed Streaming for Apache Kafka (MSK).

Now users can ETL data on the fly for faster analytics when time and scale are of the utmost importance.
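One way Kinesis Data Firehose performs this kind of in-flight ETL is by invoking an AWS Lambda function on each batch of records. As a minimal sketch, assuming a Python Lambda and JSON records with hypothetical `symbol` and `price` fields, a transformation handler decodes each record, reshapes it, and hands it back with a per-record result:

```python
import base64
import json


def lambda_handler(event, context):
    """Transform each Firehose record in flight (a minimal ETL step).

    Assumes each incoming record is a JSON object; the field names
    "symbol" and "price" are hypothetical, chosen for illustration.
    """
    output = []
    for record in event["records"]:
        try:
            payload = json.loads(base64.b64decode(record["data"]))
            # Example transform: upper-case the ticker and cast price to float.
            transformed = {
                "symbol": str(payload["symbol"]).upper(),
                "price": float(payload["price"]),
            }
            # Newline-delimit records so files written downstream are easy to split.
            data = (json.dumps(transformed) + "\n").encode("utf-8")
            output.append({
                "recordId": record["recordId"],
                "result": "Ok",
                "data": base64.b64encode(data).decode("utf-8"),
            })
        except (ValueError, KeyError, TypeError):
            # Return malformed records to Firehose unchanged, marked as failed.
            output.append({
                "recordId": record["recordId"],
                "result": "ProcessingFailed",
                "data": record["data"],
            })
    return {"records": output}
```

Firehose matches each returned record to its input by `recordId`, so every input record must come back exactly once with a `result` of `Ok`, `Dropped`, or `ProcessingFailed`.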

Ingesting huge amounts of data in near real-time, such as stock market information, sports statistics, or social media content from hundreds of millions of sources, is now possible with Kinesis or Amazon MSK for ingestion and DynamoDB or S3 for storage.

To show how simple this process is, I’ve created a basic producer and consumer using the AWS CLI.

Normally this is written in Java using the Kinesis Producer Library (KPL).

We’re going to use something much simpler. You can view and replicate this using the Git repo linked in the comments.
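The talk’s producer uses the AWS CLI, and the repository itself isn’t reproduced here. As an assumption-labeled sketch, a roughly equivalent producer in Python with boto3 is a single `put_record` call; the stream name, record, and partition key are hypothetical:

```python
import json


def put_test_record(kinesis_client, stream_name, record, partition_key):
    """Write one JSON record to a Kinesis data stream.

    kinesis_client is expected to be a boto3 Kinesis client, for example
    boto3.client("kinesis"). It is passed in so the function can also be
    exercised against a stand-in without AWS credentials.
    """
    response = kinesis_client.put_record(
        StreamName=stream_name,
        Data=json.dumps(record).encode("utf-8"),
        # Records with the same partition key are routed to the same shard.
        PartitionKey=partition_key,
    )
    # Kinesis reports which shard received the record and its sequence number.
    return response["ShardId"], response["SequenceNumber"]
```

For example, `put_test_record(boto3.client("kinesis"), "demo-stream", {"id": "test-1"}, "test-1")` would write one record, assuming a stream named `demo-stream` exists. The KPL adds batching and retries on top of this same idea, which matters at high volume but not for a demo.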

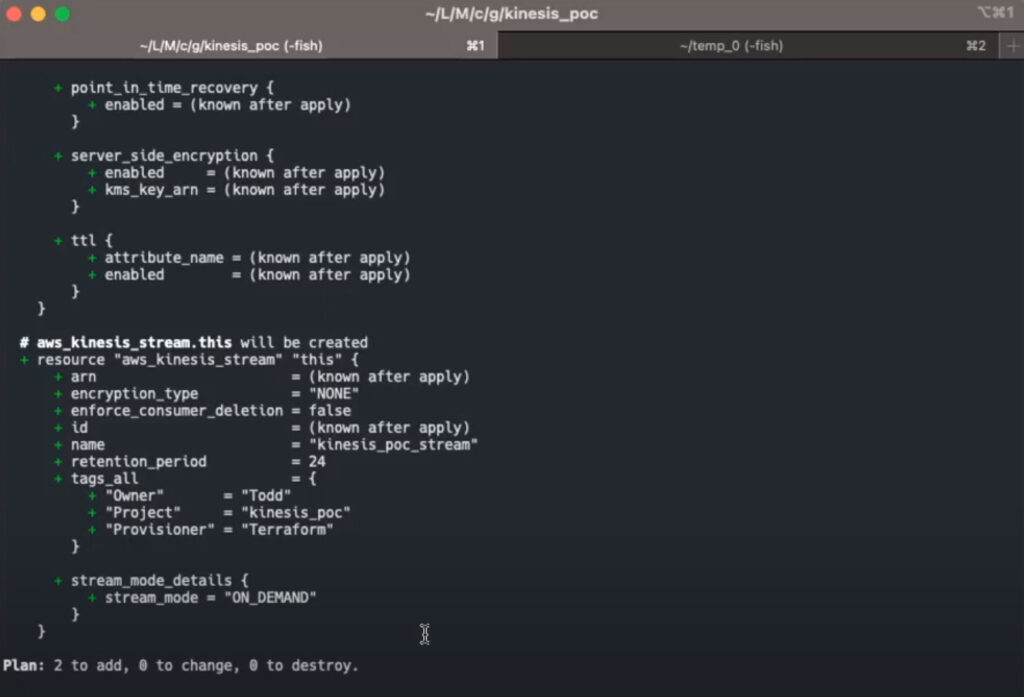

I’ve used Terraform to create a Kinesis stream and a DynamoDB table.

We can see here that we’re going to add two resources: the DynamoDB table and the Kinesis stream.
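The Terraform plan from the talk isn’t reproduced here. To show what those two resources amount to in API terms, here is a rough boto3 equivalent; the resource names, single shard, `id` key, and on-demand billing mode are all assumptions for illustration, not values from the repository:

```python
def create_demo_resources(kinesis_client, dynamodb_client, stream_name, table_name):
    """Create a Kinesis stream and a DynamoDB table via the AWS SDK.

    The clients are expected to be boto3 clients. The shard count, key
    name ("id"), and billing mode are illustrative assumptions.
    """
    # One shard is plenty for a demo; each shard accepts up to 1,000 records/s.
    kinesis_client.create_stream(StreamName=stream_name, ShardCount=1)
    dynamodb_client.create_table(
        TableName=table_name,
        # A single string partition key named "id".
        KeySchema=[{"AttributeName": "id", "KeyType": "HASH"}],
        AttributeDefinitions=[{"AttributeName": "id", "AttributeType": "S"}],
        # On-demand capacity avoids sizing read/write throughput up front.
        BillingMode="PAY_PER_REQUEST",
    )
```

Both calls return while the resources are still being created; boto3’s `stream_exists` and `table_exists` waiters can block until they are ready. Terraform handles that waiting, plus tracking state for later changes, which is one reason to prefer it over ad hoc scripts.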

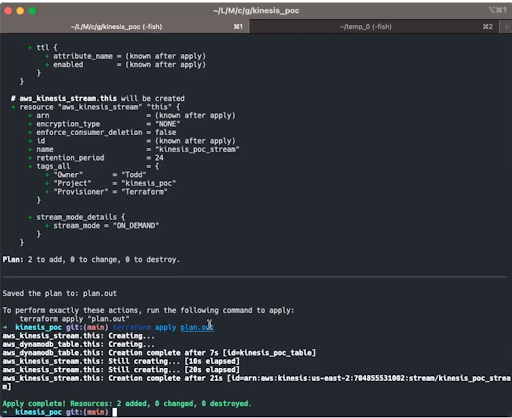

I’m going to apply this plan and fast-forward the video to where the application is complete.

There we have it: our application is complete.

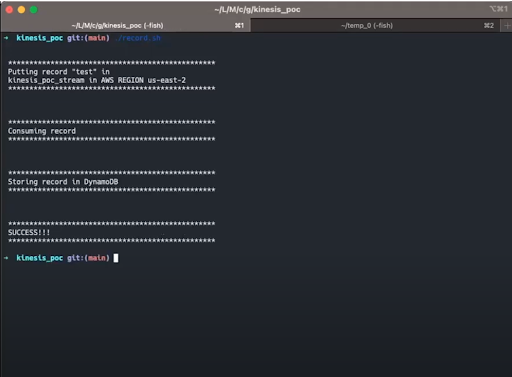

Now we can run a simple shell script that puts a record into the stream, reads that record back from the stream, and stores it in our NoSQL database, DynamoDB.

We’re going to run this shell script now:

As we do this, we put a record into the stream, consume that record, and store it in DynamoDB, and we can see that it succeeds in a very short period of time.
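The demo does the consume-and-store step with a shell script. As a hedged Python sketch of the same step, assuming boto3 clients, a single known shard, and JSON records whose values are strings and which carry the hypothetical table key `id`, one poll of the shard looks like this:

```python
import json


def consume_and_store(kinesis_client, dynamodb_client, stream_name, shard_id, table_name):
    """Read one batch of records from a shard and store each as a DynamoDB item.

    Both clients are expected to be boto3 clients. Assumes each record is a
    JSON object with an "id" field (a hypothetical key for illustration).
    """
    iterator = kinesis_client.get_shard_iterator(
        StreamName=stream_name,
        ShardId=shard_id,
        # Start from the oldest record still retained in the shard.
        ShardIteratorType="TRIM_HORIZON",
    )["ShardIterator"]
    response = kinesis_client.get_records(ShardIterator=iterator, Limit=100)
    stored = 0
    for record in response["Records"]:
        # boto3 returns Data as raw bytes, already base64-decoded.
        item = json.loads(record["Data"])
        # The low-level client needs typed attributes; "S" marks a string.
        dynamodb_client.put_item(
            TableName=table_name,
            Item={key: {"S": str(value)} for key, value in item.items()},
        )
        stored += 1
    return stored
```

A single `get_records` call can return an empty batch even when the shard has data, so real consumers keep polling with the returned `NextShardIterator`, or hand the job to the Kinesis Client Library or a Lambda event source mapping.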

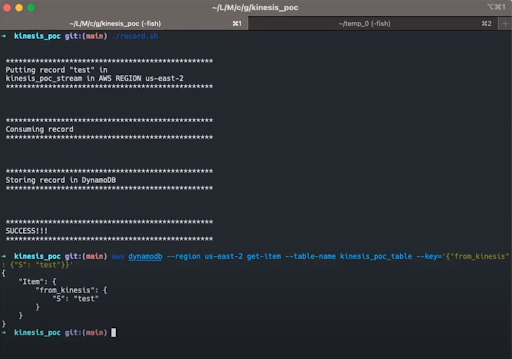

And finally, we can query that database and get our results.

This query command is in the README in the Git repo.

We will run that and immediately get our response from the database showing our test record.
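The exact query command lives in the repository’s README and may differ. As an illustrative sketch, assuming the hypothetical string key `id` and string-only attributes, looking up the test record by key with boto3’s `get_item` looks like this:

```python
def get_test_record(dynamodb_client, table_name, record_id):
    """Fetch one item by its partition key and return plain Python values.

    dynamodb_client is expected to be a boto3 DynamoDB client. Assumes the
    hypothetical string key "id" and string-only attributes.
    """
    response = dynamodb_client.get_item(
        TableName=table_name,
        Key={"id": {"S": record_id}},
        # Strongly consistent read, so an item written moments ago is visible.
        ConsistentRead=True,
    )
    # get_item omits "Item" entirely when no matching key exists.
    item = response.get("Item")
    if item is None:
        return None
    # Unwrap {"S": "..."} attribute values into plain strings.
    return {key: value["S"] for key, value in item.items()}
```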

We have put in one test record, but this could be thousands or even millions of records per second.
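Scaling a Kinesis stream to that volume comes down to shard count. As a back-of-the-envelope sketch, assuming a provisioned-mode stream and the per-shard write limits of 1,000 records per second and 1 MiB per second (ignoring on-demand mode and uneven partition keys), the minimum shard count is whichever limit binds first:

```python
import math

# Per-shard write limits for a provisioned Kinesis data stream.
MAX_RECORDS_PER_SHARD = 1_000        # records per second
MAX_BYTES_PER_SHARD = 1024 * 1024    # 1 MiB per second


def shards_needed(records_per_second, avg_record_bytes):
    """Estimate the minimum shard count for a steady write load.

    The stream needs enough shards to satisfy both the record-count
    limit and the byte-throughput limit, so take the larger of the two.
    """
    by_count = math.ceil(records_per_second / MAX_RECORDS_PER_SHARD)
    by_bytes = math.ceil(records_per_second * avg_record_bytes / MAX_BYTES_PER_SHARD)
    return max(1, by_count, by_bytes)
```

For example, `shards_needed(1_000_000, 500)` returns `1000`: a million small records per second is limited by record count, while `shards_needed(1_000_000, 2048)` returns `1954` because 2 KB records hit the byte limit first.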

ETL, analysis, or any other processing your application needs can start immediately.

Streaming has enabled a completely new and innovative way to ingest data, either synchronously or asynchronously.

And with the power of AWS, ingesting that data at speed, scaling it, and storing it is incredibly simple and secure.

Contact Blue Sentry Cloud for Cloud Strategy Services

Blue Sentry Cloud is the country’s most densely certified Cloud firm and we would like to serve you.